I’ve always loved music – as do both of my parents. They have excellent, but divergent tastes in music. With my Mum I share a love of Sandy Denny, Jeff Lynne and George Harrison, with my father there was a shared affection for Eric Coates, Henry Hall and G. F. Handel. And when you mix the two together you get my love of Maestoso, Mike Oldfield, Kevin Ayers and Barclay James Harvest.

As well as listening to music, I also enjoy making it. But I always thought making music on a computer seemed so difficult that I never really bothered trying.

However, recently I got a bit of inspiration from my friend TA Walker (Tim). Earlier this year Tim signed up for something called the 5090 Challenge – writing 50 songs in 90 days. Given that Tim has a full-time job, a wife and a young daughter, that was insanely ambitious, but astoundingly he managed 36 excellent songs, which I have been known to raid for my YouTube videos. In order to reach his goal Tim was making music anywhere using anything – he was even overdubbing vocals and recording guitalele in his car during his lunch breaks using an iPod Touch. Here is Tim playing one of his 5090 songs:

So, if Tim could make music in a car (or on a very nice looking white leather sofa) I had no excuse sitting in front of a computer that had access to a repository of free software for making noises.

I’m using Fedora 17 and I wanted to try and record music entirely using free software. This is because a) I’m on a budget of £0 and b) I think it’s the right thing to do.

|

| Rosegarden running on Fedora 17 |

The first program I tried to install was something called Rosegarden. It seemed a pretty welcoming program for beginners as music programs go and therefore a good place to start. It used staves and notes – things that a dinosaur like me can (almost!) understand. However before I could get Rosegarden to make any noise I needed a synthesiser. I don’t have a real synthesiser, so instead I needed a soft synthesiser – a program that runs on the computer and pretends it’s a real synthesiser sitting on your table.

The synthesiser that everyone seemed to recommend was something called FluidSynth, so I thought I’d install that. FluidSynth is a free software synthesiser that can take MIDI data from a program like Rosegarden and turn it into audio.

It normally comes with a “SoundFont” bank containing a nice range of sounds for a beginner, so it seemed a good start. However to use FluidSynth it’s best to have a nice graphical interface so you can fiddle with it using knobs and buttons on your desktop. The most common one is called QSynth. It looks very impressive!

|

| A very impressive addition to any desktop! |

Only, before I could use the virtual synthesiser I needed something to plug it into the computer’s sound hardware. In other words, FluidSynth needs somewhere to send all this audio it’s creating. That somewhere is a piece of software called the JACK Audio Connection Kit (JACK). But before I could use JACK I thought I’d find it easier if I had something graphical to control JACK with. So I needed something called QJackCtl – a graphical JACK control panel.

|

| QJackCtl with JACK not started |

So I downloaded all the bits I needed. I had Rosegarden (a music studio), FluidSynth (a synthesiser), JACK (a sound server), QJackCtl (a graphical interface for JACK) and QSynth (a graphical interface for FluidSynth). It was, literally, like a house that JACK built.

Now I tried to make a noise. I worked out after a couple of minutes that it’s not enough to simply load QJackCtl – JACK has to be started and stopped by pressing the Start and Stop buttons. So I tried to start JACK and it did nothing but spit error messages at me and I certainly couldn’t get anything to make any sound.

Now, this is where the cutting-edgeness of Fedora had just bitten me on the bum. Normally you should be able to start JACK and it will work without error. And indeed, since this morning’s software repository updates that’s exactly what it does do. However at that time there was a permissions problem within Fedora so I needed to type:

su -c "modprobe snd-seq-midi"

It took me an hour or so to find that out, and before I did so I couldn’t start JACK or make any noise at all. Normally I would have given up long before this point, but with M4 and Mr Cable The Sysadmin ringing in my ears I was determined and pressed on.

There were a couple of other things I had to do in JACK to get it to work. After pressing the Setup… button I had to uncheck Realtime, check Force 16bit and change the interface to hw:0.
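For anyone who prefers a terminal to the Setup… dialog, the same three choices can be written as a jackd command line. This is only a sketch: it assumes the ALSA backend and the standard jackd options, and the function name jack_command is made up for illustration. It prints the command rather than running it, so you can check it against your own hardware first.

```shell
#!/bin/sh
# Sketch: print a jackd command line that mirrors the QJackCtl Setup choices.
# jack_command is a hypothetical helper name, not part of JACK itself.
jack_command() {
    device="${1:-hw:0}"   # ALSA interface; defaults to hw:0 as in the Setup dialog
    # -r          run without realtime scheduling (Realtime unchecked)
    # -d alsa     use the ALSA backend
    # -d DEVICE   the interface chosen in Setup
    # -S          try 16-bit samples first (Force 16bit checked)
    echo "jackd -r -d alsa -d $device -S"
}

jack_command
```

If your sound card is not the first one, pass a different interface, for example `jack_command hw:1`.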

|

| QJackCtl with JACK started |

With JACK running happily, I started QSynth to get FluidSynth running. Everything seemed OK, so the next step was to run Rosegarden. No problems. I opened one of the examples in the examples folder, pressed the play button and success! Music!

However, music on my headphones only – there was nothing coming out of my speakers. I went to QJackCtl and pressed the Connect button to see what was going on.

|

| QSynth in headphone-only mode |

As you can see, the left output of QSynth (l_00) was going to my system’s playback_1 and the right output of QSynth (r_00) was going to my system’s playback_2. This was giving me music in my headphones. However, what were the other playbacks?

|

| QSynth will now use my speakers too |

I tried to connect the left output of QSynth (l_00) to playback_3 and the right output (r_00) to playback_4, and it worked. Music through my speakers!
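The same wiring can probably be done without the Connect dialog, using the jack_connect tool that ships with JACK. A rough sketch follows. The port names l_00, r_00 and playback_N are the ones shown in the dialog, but the client names "qsynth" and "system" are assumptions, so check them with jack_lsp first. The function connect_qsynth is a made-up helper that only prints the commands.

```shell
#!/bin/sh
# Sketch: print the jack_connect commands that wire QSynth's stereo outputs
# to a pair of playback ports. connect_qsynth is a hypothetical helper.
connect_qsynth() {
    # $1 = playback port number for the left channel, $2 = for the right.
    # "qsynth" and "system" as JACK client names are assumptions; jack_lsp
    # lists the real names on your machine.
    echo "jack_connect qsynth:l_00 system:playback_$1"
    echo "jack_connect qsynth:r_00 system:playback_$2"
}

connect_qsynth 3 4          # print the commands for review
# connect_qsynth 3 4 | sh   # run them once JACK and QSynth are both up
```

Printing first and piping to sh afterwards means nothing touches JACK until you have seen exactly what will be connected.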

So every time I want to make music I…

- load QJackCtl,

- start JACK by pressing the Start button,

- load QSynth

- then load Rosegarden

…always in that order.
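The order matters because each program needs the previous one to be ready. The list above could be scripted roughly like this. It is a sketch under assumptions: that the programs are installed as qjackctl, qsynth and rosegarden, that qjackctl's --start option starts JACK straight away, and that jack_lsp fails until the server is up. The names wait_for and launch_studio are invented for this example.

```shell
#!/bin/sh
# wait_for CMD [TRIES]: run CMD once a second until it succeeds.
# Returns 0 on success, 1 if all TRIES attempts (default 10) fail.
wait_for() {
    probe="$1"
    tries="${2:-10}"
    while [ "$tries" -gt 0 ]; do
        if $probe >/dev/null 2>&1; then
            return 0
        fi
        tries=$((tries - 1))
        if [ "$tries" -gt 0 ]; then
            sleep 1
        fi
    done
    return 1
}

# Hypothetical launcher following the order in the list above.
launch_studio() {
    qjackctl --start &                 # assumption: --start also starts JACK
    wait_for jack_lsp 15 || return 1   # give up if JACK never comes up
    qsynth &
    sleep 2                            # crude pause so QSynth can register its ports
    rosegarden &
}
```

Nothing runs until you call `launch_studio` yourself. The two-second pause is a guess and may need adjusting.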

Provided I just wanted to enter musical notation into Rosegarden I was now fine, but that’s not much fun. The frustrated Woolly Wolstenholme in me wanted to have a keyboard to play!

The trouble was as well as not having a synthesiser, I don’t have a keyboard either. Fortunately there are “virtual keyboards” available that allow you to play music using your computer’s keyboard. The one I chose out of a field of three was called Virtual MIDI Piano Keyboard (VMPK). I chose this one because it was the only one that seemed able to play nicely with the Hungarian keyboard on my computer.

|

| Be Woolly in the comfort of your own home… |

However, recording MIDI data created with a virtual keyboard meant I had to plug it into something that records MIDI data – Rosegarden. It was back to the QJackCtl Connect dialog:

|

| VMPK running, but QJackCtl shows nothing to plug it into |

VMPK had appeared in the MIDI tab of the QJackCtl Connect dialog. The trouble was, nothing else did – the only thing I could plug my virtual keyboard into was itself.

This proved to be a very tricky problem to sort out. It took me a long time to find an answer but the answer was a program called a2jmidid. Apparently there are two kinds of MIDI on a GNU/Linux machine – ALSA MIDI and JACK MIDI. They can’t talk to each other without a “bridge” program. The bridge is called a2jmidid and it’s available in the Fedora repository. To use it I had to start a terminal window and type:

a2jmidid

Then, provided I kept the terminal window open, when I went back to the QJackCtl Connect dialog I got some extra things in the MIDI tab:

|

| VMPK connected to Rosegarden in QJackCtl |

As you can see, I can now connect the VMPK Output to the Rosegarden record in and, hey bingo, I’ve got a MIDI keyboard connected to Rosegarden.

|

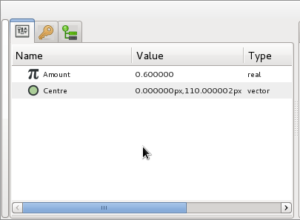

| VMPK configured for a Magyar keyboard |

The only thing left to do with VMPK is create a Hungarian key mapping – this was very easy to do using a dialog provided by the program.

The first thing I wanted to try and record was a tune I remembered from my childhood. It was an early music or baroque piece for recorder and a small ensemble used by the Open University before their morning broadcasts. I have never heard it since those early mornings in the 1980s when I used to get up early to watch a maths foundation course on calculus or the foundation course on modern art.

|

| A lost childhood memory |

I did a rather twee arrangement using a harpsichord, viola, cello and a recorder. I think the real thing was less Bach and more Henry VIII.

However when I came to play it the recorder just didn’t sound right. It sounded very good, but it didn’t sound like the recorder I had in my head. So I looked on-line to see if there were any other SoundFont banks I could use with QSynth.

I was in luck because the pianist and composer S. Christian Collins has put together an excellent SoundFont bank for QSynth and put it on his website here. It’s called the GeneralUser GS SoundFont bank.

|

| GeneralUser GS Soundfont bank loaded into QSynth |

To load it I had to get QSynth running and press Setup…. Next, I had to go to the Soundfonts tab and replace the default soundfont bank (an sf2 file) with the GeneralUser GS SoundFont bank I had downloaded.

To my delight the recorder sounded much more how I wanted it to sound.

So now that I had finished and was happy with my sounds, I realised I needed some way of recording what I’d just done as an audio file instead of a Rosegarden file.

When I ran QJackCtl with nothing else running the Connect dialog looked like this:

|

| By default I can only get sound from the microphone |

If you look at the Readable Clients box on the left you’ll see the only place I could get any audio is from capture_1 and capture_2. They represent the microphone socket on my computer. capture_1 is the left channel and capture_2 is the right channel of the stereo microphone input.

If I ran Rosegarden I found they were connected automatically to Rosegarden’s record in 1 L and record in 1 R Input Ports:

|

| Rosegarden connects to the microphone automatically |

I looked in the QJackCtl Setup dialog and saw a Monitor check box which was unchecked. It sounded like what I needed so I checked it.

|

| However you can enable monitoring |

When I restarted JACK I saw this:

|

| And route the monitor output where you want it |

So now I have monitor output in addition to microphone input as a potential source of audio. What monitor output means is that I can record whatever I can hear through the speakers. This is just what I needed.

|

| Such as here, where the monitor output is routed to Rosegarden |

I started Rosegarden up again and connected up monitor_1 and monitor_2 to record in 2 L and record in 2 R.

This meant that now Rosegarden had the system’s sound output available as a source of audio. Now I could use Rosegarden to record whatever Rosegarden was playing as an audio file!

|

| You can easily turn the metronome off in Rosegarden |

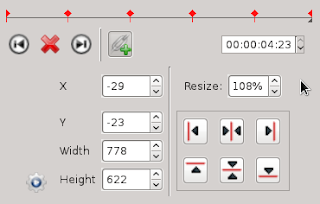

Setting this up in Rosegarden is quite easy and pretty logical once you work it out (which took me a long time!). The first thing you need to do is go to Studio -> Manage Metronome to turn off the click track. You usually don’t want that on your master recordings!

The next thing you need is an audio track that can accept the monitor output as its audio input:

|

| Rosegarden all set up to record Rosegarden |

You can see in the picture above I’ve set track 17 as my current track. It’s an audio track and I’ve called it RECORD.

On the left hand side you’ll notice that I’ve set the In: to In2. This is very important – In2 is the Rosegarden input we connected up to the monitor output in QJackCtl earlier. Never use In1 – it’s quiet and full of interference noise!

Finally you’ll notice I’ve armed track 17 to record – shown by the red light to the left of the track name. Now when I press the record button my Rosegarden file will play and be recorded as an audio file on track 17 at the same time.

|

| My recorded Rosegarden output in Rosegarden |

When the track has finished you will see the waveform displayed in your recording track as it is above.

|

| Double-clicking on an audio track segment in Rosegarden opens Audacity |

Now you can double click on the recorded segment and it will open in Audacity. Don’t forget to set Audacity to JACK Audio Output as I have in the picture above, or it will freeze and not play. From Audacity you can edit or export the audio in the usual way.

|

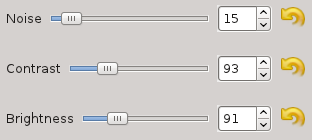

| For OGG Vorbis files or MP3 files I normalize to -2.0dB |

I always save a lossless FLAC file from Audacity first. If I want to save to a lossy format such as OGG Vorbis or MP3 I always Normalize to -2.0 dB first before I export.
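For the curious, decibels relative to full scale turn into a linear peak level through 10^(dB/20). The little sketch below works that out. The function name db_to_amplitude is made up, and this is only the arithmetic behind the number, not a description of what Audacity does internally.

```shell
#!/bin/sh
# Sketch: convert a peak level in dB (relative to full scale) into a
# linear amplitude between 0 and 1, using amplitude = 10^(dB/20).
# db_to_amplitude is a hypothetical helper name.
db_to_amplitude() {
    awk -v db="$1" 'BEGIN { printf "%.3f\n", 10 ^ (db / 20) }'
}

db_to_amplitude -2.0   # prints 0.794
```

So normalizing to -2.0 dB leaves the loudest peak at about 79% of full scale, which keeps a little headroom in reserve before the lossy encoder gets to work.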

Being able to set Audacity to use JACK audio output is very handy – particularly if you find you want to listen to audio files while you are working.

So now I had a FLAC file, an Ogg Vorbis file and an mp3 file. The FLAC file was fine, but what I really wanted to do was get a picture in my mp3 and ogg files so they would be just like the ones I downloaded from TA Walker’s Bandcamp page.

To do this I found an excellent program called EasyTAG which does exactly what its name suggests. This program allows you to add a picture to your audio files and is very easy to use. Although I tend to use Ex Falso for most of my tagging (it’s better for classical music) I’ll use EasyTAG for tagging my own files in future.

The next thing I decided to do was re-record the OU Tune in Mike Oldfield style. When I was a child I remember watching Simon Groom visit Mike Oldfield to see him re-record the Blue Peter signature tune. That video had an enormous effect on me as a child and recording something like that was something I always wanted to try.

I had a lot of fun in Rosegarden pretending to be Mike – particularly tapping away on my computer’s keyboard pretending to play the bodhrán.

When I finished Tim very kindly recorded a real electric guitar solo for me to add to my track. He supplied it to me as a FLAC file, but the funny thing was I could not find any way of importing a FLAC file into Rosegarden – only .WAV files.

|

| TA Walker’s solo shown on the red track |

However, by accident, I discovered you could import FLAC files directly into Rosegarden if you dragged and dropped them onto the time track.
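If drag and drop had not worked, another route would have been to decode the FLAC file to WAV first with the flac command-line tool. A minimal sketch, assuming flac is installed; the file name solo.flac and the helper names wav_name and flac_to_wav are made up for illustration.

```shell
#!/bin/sh
# Sketch: decode a FLAC file to a WAV file with the same base name.
# wav_name and flac_to_wav are hypothetical helpers.

# Swap a trailing .flac for .wav, e.g. solo.flac -> solo.wav
wav_name() {
    echo "${1%.flac}.wav"
}

# Decode $1 into a WAV file next to it; needs the flac tool installed.
flac_to_wav() {
    flac --decode --output-name="$(wav_name "$1")" "$1"
}

wav_name "solo.flac"       # shows the name the WAV file would get
# flac_to_wav "solo.flac"  # uncomment to do the actual conversion
```

The resulting .WAV file could then be imported into Rosegarden in the usual way.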

I’d enjoyed myself so much with the Open University tune I decided to record another tune Mike Oldfield-stylee, so I dusted off my recording of Border Television’s Keltic Prelude March by L. E. DeFrancesco and did that as well!

The reason I did the Keltic Prelude March was so that I could upload my video of a Border Television start-up that I had pulled down earlier this year. The reason I had pulled it down was because of a copyright claim over the track Holiday People by James Clarke that I had used over the menu rundown. Therefore I decided I would also create a pastiche of Holiday People to use in my Border start-up. I came up with a tune I called Lionel’s Talking!

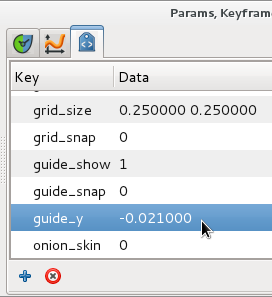

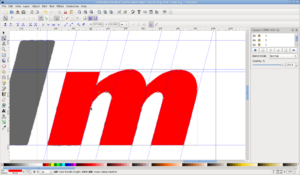

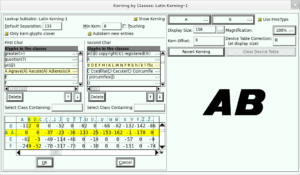

|

| Lionel’s Talking in Hydrogen |

I needed a “real” drum kit for Lionel’s Talking so I decided to use a special drum synthesiser called Hydrogen which does the job flawlessly. Hydrogen also works beautifully in tandem with Rosegarden. The Rosegarden website has a wonderful tutorial to explain how to do this here.

So put it all together and what do you have? Well something like this…

Producing music on GNU/Linux can be a bewildering and frustrating experience at first. There are so many things you need – each one has to be set up correctly in order to work properly. There is a huge amount to learn and sometimes you feel the easiest thing is to just give up. I spent a lot of time trying, and failing, to do things which I thought should have been easy.

In addition, differences in hardware mean what you have to do to get everything working is slightly different for everyone.

But with a little perseverance you find that things rapidly begin to make sense: there is a common logic that underlies all the things you have to do, and you begin working out answers to your problems yourself.

I hope you try making some music too.